Game Theory and Team Dynamics: Why Your High Performers Undermine Each Other

Your sales team has top-tier talent. Each member could sell ice to Arctic researchers. Yet collectively, they're underperforming. Your engineering team is stacked with senior developers. But projects drag on, riddled with preventable errors because no one shared what they learned. Your analysts compete so fiercely for the "analyst of the year" award that they've stopped collaborating on cross-functional reports entirely.

The problem isn't your people. It's the game you've designed for them to play.

When Individual Rationality Produces Collective Stupidity

Game theory predates the Cold War, but it came of age there as a practical framework for understanding strategic behavior when outcomes depend on what others do. A nuclear arms race made perfect sense for each superpower individually (build more weapons or fall behind), yet collectively it produced mutual vulnerability. The same logic governs your high-performing teams.

Consider the Nash equilibrium, named for mathematician John Nash. It describes a situation where each player is doing the best they can given what the others are doing, so no one can improve their position by changing strategy alone. Critically, a Nash equilibrium isn't necessarily optimal for the group. It's simply stable, and it can be a trap in which individual rationality locks everyone into a suboptimal outcome.

Your team dynamics likely contain multiple Nash equilibria. The question is whether you've designed incentives that push people toward productive equilibria or destructive ones.

The Knowledge-Sharing Game: A Prisoner's Dilemma

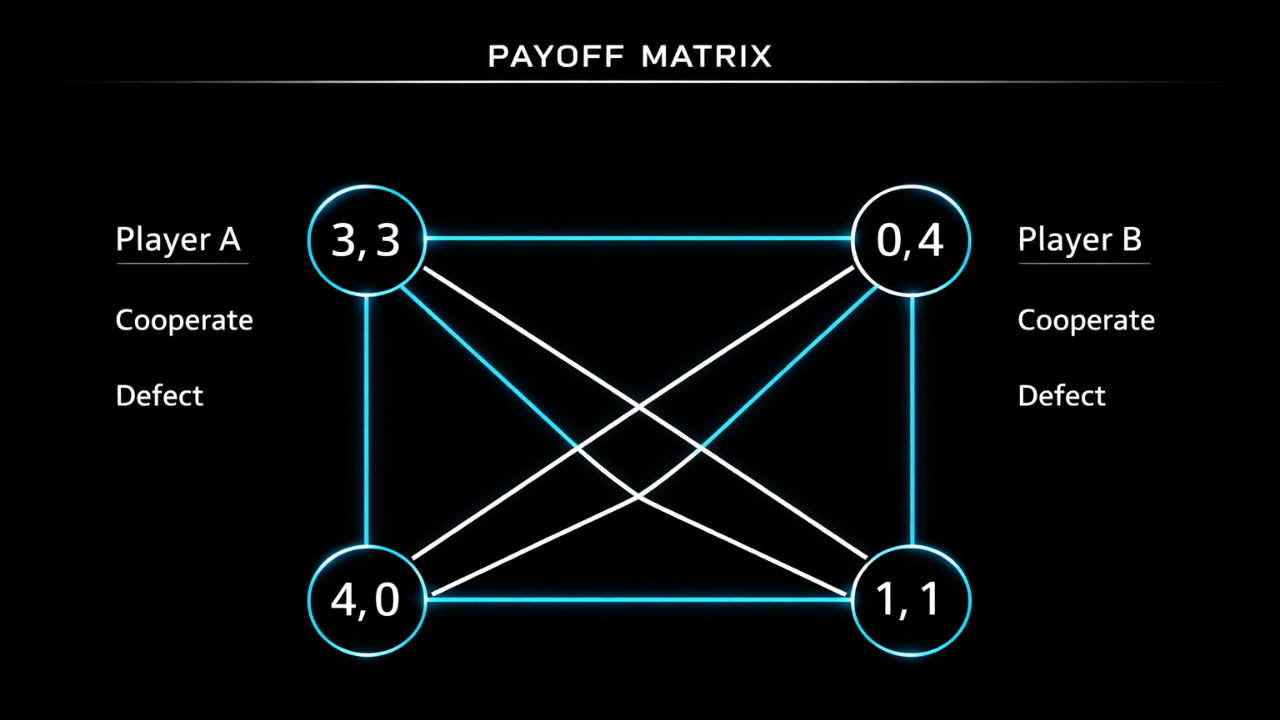

The prisoner's dilemma is game theory's most famous illustration of how individual incentives can betray collective interests. Two suspects are interrogated separately. If both stay silent, both get light sentences. If one betrays the other, the betrayer goes free while the other gets a harsh sentence. If both betray, both get moderate sentences.

The rational choice for each prisoner? Betray. Regardless of what the other does, betraying yields a better individual outcome. Yet both betraying produces a worse outcome than both staying silent.

Knowledge sharing in teams can follow the same logic. Imagine two high performers working on related projects. Both would benefit if they shared insights, tools, and approaches. But sharing costs the sharer time and effort, while the benefit goes to whoever receives the knowledge, whether or not they reciprocate. Worse, in zero-sum promotion systems, helping a colleague might mean they outshine you.

The individual calculus is clear:

If they share and you don't: You benefit from their knowledge while preserving your time and competitive advantage

If neither shares: You're even with them, no worse off

If you share and they don't: You've spent time helping a competitor

If both share: You both benefit, but you've spent time you could have used to pull ahead

The Nash equilibrium? Neither shares. Each person is maximizing their individual position given what they expect from the other. The result: duplicated effort, repeated mistakes, and slower collective progress. Everyone loses, but no one can improve by changing alone.
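To see why mutual withholding is the stable outcome, it helps to put numbers on the four cases. The sketch below is an illustration, not a measurement: the payoff values and the names `PAYOFFS` and `pure_nash_equilibria` are assumptions, chosen only to follow the standard prisoner's dilemma ordering (free-riding beats mutual sharing, which beats mutual withholding, which beats being the only one who shares). It checks every pair of choices and keeps the pairs where neither person gains by switching alone.

```python
from itertools import product

ACTIONS = ("share", "withhold")

# Hypothetical payoffs as (you, colleague). Only the ordering matters:
# free-riding (5) > both share (3) > neither shares (1) > sharing alone (0).
PAYOFFS = {
    ("share", "share"): (3, 3),
    ("share", "withhold"): (0, 5),
    ("withhold", "share"): (5, 0),
    ("withhold", "withhold"): (1, 1),
}


def pure_nash_equilibria(payoffs, actions=ACTIONS):
    """Return the action pairs where neither player gains by deviating alone."""
    equilibria = []
    for you, colleague in product(actions, repeat=2):
        your_payoff, their_payoff = payoffs[(you, colleague)]
        # Could you do better by switching while your colleague stays put?
        you_can_improve = any(
            payoffs[(alt, colleague)][0] > your_payoff for alt in actions
        )
        # Could your colleague do better by switching while you stay put?
        they_can_improve = any(
            payoffs[(you, alt)][1] > their_payoff for alt in actions
        )
        if not (you_can_improve or they_can_improve):
            equilibria.append((you, colleague))
    return equilibria


print(pure_nash_equilibria(PAYOFFS))
```

With these illustrative numbers, the only pair that survives is both people withholding, even though both sharing would pay each of them more.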

The tragedy isn't that people are selfish. It's that you've designed a game where selfishness is rational.

Zero-Sum Thinking When Promotions Are Limited

Corporate hierarchies create zero-sum games. There's one VP position. Three directors are qualified. Two won't get it.

This transforms collaboration into a strategic liability. Every project where you help a peer is a project where you've potentially helped them leapfrog you. Every insight you share is ammunition they might use to outshine you in the next executive review.

The rational response? Information hoarding. Risk aversion. Credit seeking. Behaviors that look like poor culture but are actually rational responses to the incentive structure you've created.

Finance teams often exemplify this pathology. Analysts compete for limited senior positions. The smart ones hoard their Excel models, their data sources, their analytical approaches. When forced to collaborate, they share the minimum required. The result: every analyst rebuilds tools that already exist, basic errors propagate across teams, and the collective analytical capability of the organization never compounds.

One CFO described this as "reinventing the wheel 40 times per year, badly." The solution isn't to hire more collaborative people. It's to redesign the game.

Information Hoarding as Rational Strategy

When your value depends on unique knowledge, sharing that knowledge is existentially threatening. This is especially acute in technical roles where expertise is the primary source of leverage.

The senior engineer who's the only one who understands the legacy billing system has job security. Teaching others threatens that security. Yes, it creates a bus factor problem for the organization. But the organization's risk isn't the engineer's problem; it's management's problem for creating incentives that reward information monopolies.

The experienced sales rep who's figured out how to navigate procurement at major accounts has a competitive advantage. Sharing that playbook helps rivals competing for the same promotion. The fact that sharing would lift team performance overall doesn't change the individual calculus.

This is mechanism design failure, not character flaw. You've created a game where the dominant strategy is to hoard information, then you're surprised when people play dominant strategies.

Credit-Seeking Behaviors and the Volunteer's Dilemma

The volunteer's dilemma is another game theory classic: a group task requires one person to bear a cost for everyone to benefit. If someone volunteers, everyone wins. If no one volunteers, everyone loses. But the best individual outcome is letting someone else volunteer while you free-ride on their effort.

This maps directly onto collaborative work. Someone needs to update the shared documentation. Someone needs to mentor the junior team member. Someone needs to coordinate with the adjacent department. These tasks benefit everyone, but they require time and effort from whoever does them.

The rational strategy? Wait for someone else to volunteer. If everyone plays this strategy, critical work doesn't get done. When it finally becomes urgent enough that someone must do it, resentment builds; the person who volunteers feels like a sucker for bearing costs others avoided.
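The waiting game can be quantified under a simple textbook model. Assume `n` people each value the finished task at `benefit`, the one who does it pays `cost` (with `cost` less than `benefit`), and everyone independently volunteers with the same probability. In that symmetric equilibrium each person is indifferent between stepping up and waiting, which gives a volunteering probability of 1 - (cost / benefit) ** (1 / (n - 1)). The function names and the example numbers below are assumptions for illustration only.

```python
def volunteer_probability(n, benefit, cost):
    """Per-person chance of volunteering in the symmetric mixed equilibrium."""
    if n < 2 or not 0 < cost < benefit:
        raise ValueError("need n >= 2 and 0 < cost < benefit")
    # Indifference condition: benefit - cost == benefit * P(someone else volunteers)
    return 1 - (cost / benefit) ** (1 / (n - 1))


def prob_task_undone(n, benefit, cost):
    """Chance that nobody volunteers when everyone plays the equilibrium."""
    p = volunteer_probability(n, benefit, cost)
    return (1 - p) ** n


# Hypothetical values: the task is worth 10 to each person and costs 2.5 to do.
for team_size in (2, 5, 10, 50):
    print(team_size, round(prob_task_undone(team_size, benefit=10, cost=2.5), 3))
```

In this model, adding people makes things worse, not better: the larger the group, the more each person counts on someone else, and the chance that the task goes undone rises toward cost / benefit.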

The credit-seeking corollary is even more pernicious. In environments where promotions depend on visible individual achievements, people optimize for high-visibility projects over high-value contributions. Writing the press release gets more recognition than fixing the broken data pipeline. Presenting at the executive meeting gets more credit than mentoring the team that did the actual work.

Again, this isn't dysfunction; it's a rational response to misaligned incentives. When the reward system favors credit over contribution, you get credit-seeking behavior.

Escaping Bad Equilibria Through Mechanism Design

Game theory doesn't just diagnose problems; it provides a framework for designing better games.

The key insight: you can't change the Nash equilibrium by asking people to play differently. You have to change the game structure itself so that individual rationality aligns with collective goals.

Strategy 1: Team-Based Incentives Alongside Individual Metrics

If 80% of someone's bonus depends on individual performance and 20% on team performance, they'll optimize for individual performance. The 20% is too small to change behavior.

Flip the ratio. Make 60-70% of compensation tied to team outcomes. Suddenly, helping teammates becomes rational. Their success is your success. Information hoarding hurts you directly.
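How large the team share needs to be depends on what sharing costs and what it is worth. The sketch below assumes a deliberately simple model: sharing reduces your own output by `cost`, raises your colleague's output by `gain`, and your pay is a weighted blend of your own output and the two-person team average. All names and numbers here are hypothetical. Under those assumptions, sharing starts to pay once the team weight exceeds 2 * cost / (cost + gain).

```python
def sharing_advantage(team_weight, cost=1.0, gain=3.0):
    """Change in your pay from sharing instead of withholding.

    Assumed model: pay = (1 - team_weight) * own_output
                       + team_weight * average_output_of_the_pair.
    Sharing lowers your own output by `cost` and raises your colleague's
    output by `gain`, so the pair's average changes by (gain - cost) / 2.
    """
    individual_part = (1 - team_weight) * (-cost)
    team_part = team_weight * (gain - cost) / 2
    return individual_part + team_part


def break_even_team_weight(cost=1.0, gain=3.0):
    """Team weight above which sharing raises your own pay in this model."""
    return 2 * cost / (cost + gain)


# Compare a 20% team weight with a flipped ratio (illustrative values only).
for weight in (0.2, 0.5, 0.65):
    print(weight, round(sharing_advantage(weight), 3))
```

With the illustrative cost of 1 and gain of 3, the break-even weight is 0.5: a 20% team component leaves sharing a net loss, while 65% makes it a net gain. Different costs and gains move that threshold, so treat the number as a property of the assumptions rather than a rule.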

This requires rethinking performance management. Instead of stack-ranking individuals, evaluate teams collectively. Instead of promoting the top individual performer, promote the person who most elevated team performance.

Strategy 2: Forced Collaboration Through Project Structure

Don't make collaboration optional; make it mandatory through how you structure work.

Pair programming forces knowledge sharing because two people are writing code together. Rotating team leads ensures no one can build an information monopoly. Requiring sign-off from peers creates mutual accountability.

The sales team example: instead of individual territories, assign pairs to major accounts. Each person's commission depends on the pair's performance. Suddenly, knowledge sharing becomes rational; your success depends on your partner's capability.

Strategy 3: Rotate Team Membership to Prevent Defection

Repeated games change incentives. If you're playing the prisoner's dilemma once, betrayal is rational. If you're playing it repeatedly with the same person, with no fixed end in sight, cooperation can become rational because today's betrayal means tomorrow's retaliation.

Rotating team membership creates repeated games across the organization. The person you refuse to help today might be tomorrow's project partner or manager. The person you hoard information from might control access to resources you need next quarter.

This doesn't require formal rotation programs. It means ensuring people work across enough different teams and projects that their reputation precedes them. Short-term defection stops being rational when it damages long-term relationships.
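The repeated-game logic can be made concrete with a standard textbook condition. The sketch below assumes an indefinitely repeated prisoner's dilemma in which each round is followed by another with probability `delta`, and a "grim trigger" response: after one betrayal, the other person never cooperates again. The payoff values and function names are hypothetical. Under those assumptions, cooperation holds when `delta` is at least (temptation - reward) / (temptation - punishment).

```python
def min_continuation_probability(temptation, reward, punishment):
    """Smallest chance of meeting again at which cooperation is self-enforcing."""
    if not temptation > reward > punishment:
        raise ValueError("need temptation > reward > punishment")
    return (temptation - reward) / (temptation - punishment)


def cooperation_is_stable(delta, temptation=5, reward=3, punishment=1):
    """Compare cooperating forever with betraying once and being punished after.

    `delta` is the assumed probability (0 <= delta < 1) that the two people
    will work together again after any given round.
    """
    if not 0 <= delta < 1:
        raise ValueError("delta must be in [0, 1)")
    cooperate_forever = reward / (1 - delta)
    betray_once = temptation + delta * punishment / (1 - delta)
    return cooperate_forever >= betray_once


print(min_continuation_probability(temptation=5, reward=3, punishment=1))
print(cooperation_is_stable(0.8), cooperation_is_stable(0.3))
```

The design point is that the threshold is about how likely people are to cross paths again, not about their character. Anything that raises that likelihood, such as working across many teams, pushes `delta` up and makes defection less attractive.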

Strategy 4: Make Cooperation Measurable and Rewarded

You can't manage what you don't measure. If collaboration is important, measure it.

Track knowledge-sharing contributions: documentation written, mentoring hours, cross-team projects completed. Include peer reviews in promotion decisions, not just upward feedback. Make helping others a formal part of performance evaluation.

One company changed its promotion criteria to require evidence of "multiplying team effectiveness." Candidates had to document how they'd made their teammates better, not just their individual achievements. The result: information sharing increased because it became a requirement for advancement, not a competitive liability.

Strategy 5: Public Recognition for Helping Others

Recognition is a zero-sum game if you only recognize individual achievement. It becomes positive-sum if you recognize contributions to others' success.

Create awards for mentorship. Celebrate people who write documentation, fix shared tools, or coordinate cross-functional work. Make helping others visibly valued, not just tolerated.

This shifts the signaling game. Instead of competing to be seen as the individual star, people compete to be seen as the force multiplier. The equilibrium shifts from hoarding to sharing.

When Competition IS Optimal

The solution isn't always cooperation. Sometimes competition produces better outcomes than collaboration.

Simple, Independent Tasks

When work is straightforward and individual effort directly determines output, competition works well. Sales is the classic example; if territories are genuinely independent and each rep's success doesn't depend on others, individual incentives align with organizational goals.

The test: can someone succeed by optimizing only their own work, or does success require coordination? If the former, competitive incentives may be optimal.

Clear Individual Metrics

When you can measure individual contribution accurately and that contribution directly translates to organizational value, individual incentives work. Commission-based sales compensation makes sense because you can measure what each person sold.

The problem arises when individual metrics don't capture full value contribution: when someone's work enables others' success, when quality matters more than quantity, or when coordination costs are high.

Low Interdependence

Competition works when people aren't dependent on each other's work. Customer service representatives handling independent cases can be individually incentivized. Software engineers working on tightly coupled systems cannot, because each person's output depends on everyone else's.

The key is honest assessment of interdependence. Most organizations have more interdependence than their incentive structures acknowledge.

When Cooperation Is Critical

Complex, Interdependent Work

Modern knowledge work is rarely independent. Code repositories have dependencies. Financial models pull from shared data sources. Marketing campaigns require coordination across content, design, and analytics.

The more interdependent the work, the more individual incentives backfire. You need people to optimize for collective outcomes, which means making collective outcomes drive individual rewards.

Knowledge Sharing Essential

If organizational capability depends on distributing expertise, not concentrating it, you need cooperation incentives. The goal isn't creating individual experts; it's building collective expertise that compounds over time.

This is especially critical in technical domains where knowledge gaps create bottlenecks. The faster expertise spreads, the faster the organization moves.

Innovation Requires Collaboration

Innovation rarely comes from isolated genius. It emerges from combining perspectives, challenging assumptions, and building on each other's ideas. This requires psychological safety to share half-formed thoughts and incentives that reward contribution to innovation, not just claiming credit for it.

Teams that hoard information don't innovate; they iterate within their silos. Cross-pollination requires cooperation.

Designing Games Worth Playing

The executive's job isn't to demand better culture. It's to design better games.

Start by mapping the actual game structure:

What metrics drive compensation and promotion decisions?

How interdependent is the work?

What behaviors would a rational person pursue given current incentives?

What's the Nash equilibrium of your current system?

Then ask: is this the equilibrium you want? If high performers undermining each other is the stable state, you have a mechanism design problem, not a people problem.

The solution isn't better hiring. You can't hire your way out of bad incentive structures. The solution is redesigning the game so that individual rationality produces collective success.

Game theory won't tell you whether to prioritize competition or cooperation. But it will tell you whether your current system actually incentivizes the behaviors you claim to value. And it will reveal why asking people to "just collaborate more" fails when collaboration is individually irrational.

Your high performers aren't the problem. The game you've designed for them is.